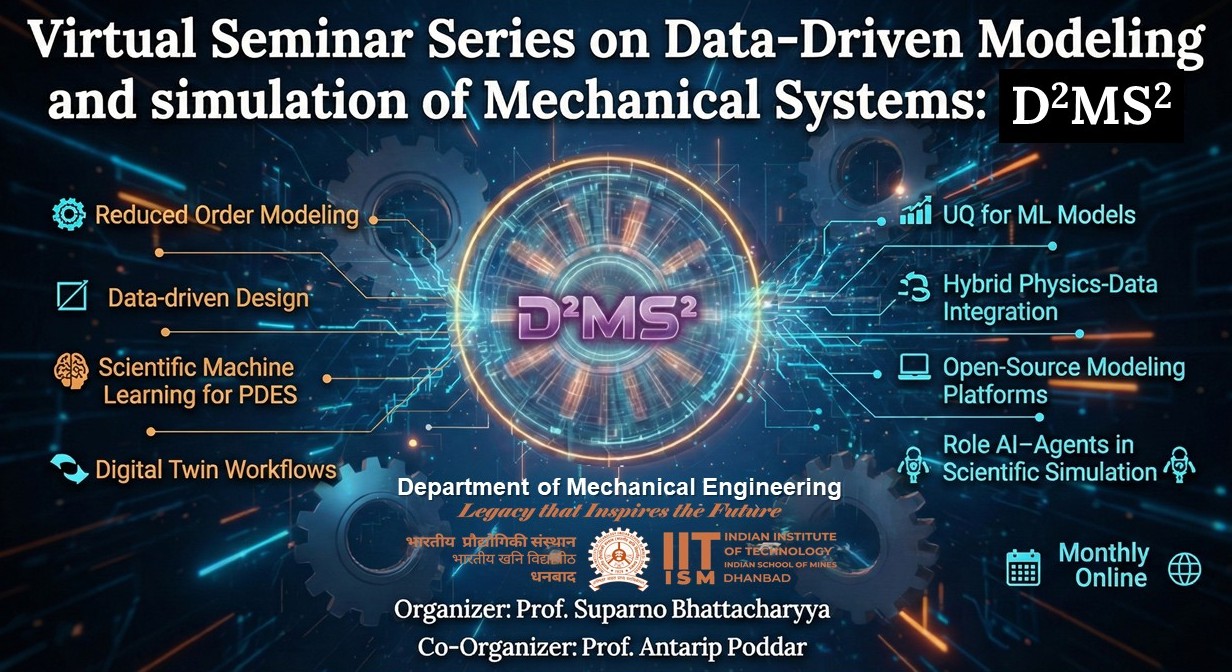

Data-Driven Modeling and Simulation of Mechanical Systems (D²MS²) Virtual Seminar Series

About the Series

D²MS² is a monthly virtual seminar series hosted by the Department of Mechanical Engineering, IIT (ISM) Dhanbad.

We explore the frontiers of data-informed and physics-based modeling for mechanical, fluid, and thermal systems.

Organized by: Department of Mechanical Engineering

Institution: Indian Institute of Technology (Indian School of Mines), Dhanbad

Core Themes

- 🔹 Reduced-order modeling

- 🔹 Scientific ML for PDEs

- 🔹 Digital twins

- 🔹 Hybrid physics–data methods

- 🔹 Uncertainty quantification (UQ) for ML

- 🔹 Open-source platforms

- 🔹 AI agents for simulation

🎙️ Upcoming Talk

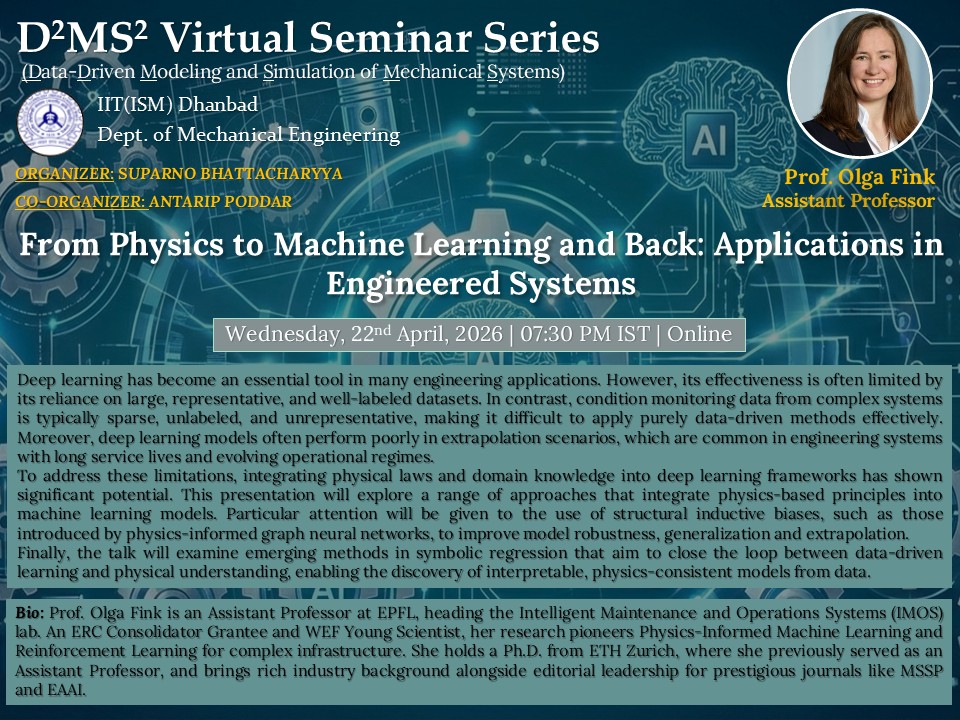

📅 April 22, 2026 | 🕢 07:30 PM IST

Prof. Olga Fink

From Physics to Machine Learning and Back: Applications in Engineered Systems

Abstract

Deep learning has become an essential tool in many engineering applications. However, its effectiveness is often limited by its reliance on large, representative, and well-labeled datasets. In contrast, condition monitoring data from complex systems is typically sparse, unlabeled, and unrepresentative, making it difficult to apply purely data-driven methods effectively. Moreover, deep learning models often perform poorly in extrapolation scenarios, which are common in engineering systems with long service lives and evolving operational regimes. To address these limitations, integrating physical laws and domain knowledge into deep learning frameworks has shown significant potential. This presentation will explore a range of approaches that integrate physics-based principles into machine learning models. Particular attention will be given to the use of structural inductive biases, such as those introduced by physics-informed graph neural networks, to improve model robustness, generalization, and extrapolation. Finally, the talk will examine emerging methods in symbolic regression that aim to close the loop between data-driven learning and physical understanding, enabling the discovery of interpretable, physics-consistent models from data.

Bio: Olga Fink has been an assistant professor at EPFL since March 2022, heading the Intelligent Maintenance and Operations Systems (IMOS) laboratory. She is the recipient of an ERC Consolidator Grant. Olga’s research focuses on Physics-Informed Machine Learning, Multi-Modal Learning, Domain Adaptation and Generalization, and Reinforcement Learning for Intelligent Maintenance and Operations of Infrastructure and Complex Assets. Before joining the EPFL faculty, Olga was an assistant professor of intelligent maintenance systems at ETH Zurich from 2018 to 2022 and was awarded the prestigious professorship grant of the Swiss National Science Foundation (SNSF). Between 2014 and 2018, she headed the research group “Smart Maintenance” at the Zurich University of Applied Sciences (ZHAW). Olga received her Ph.D. from ETH Zurich and her Diploma degree from Hamburg University of Technology. She has gained valuable industrial experience as a reliability engineer with Stadler Bussnang AG and as a reliability and maintenance expert with Pöyry Switzerland Ltd. Olga serves on the editorial boards of several prestigious journals, including Mechanical Systems and Signal Processing, Engineering Applications of Artificial Intelligence, and Reliability Engineering and System Safety. In 2019, Olga was recognized as a young scientist of the World Economic Forum. In 2020, 2021, and 2024, she was honored as a young scientist of the World Laureates Forum. In 2023, she was named a fellow of the Prognostics and Health Management Society.

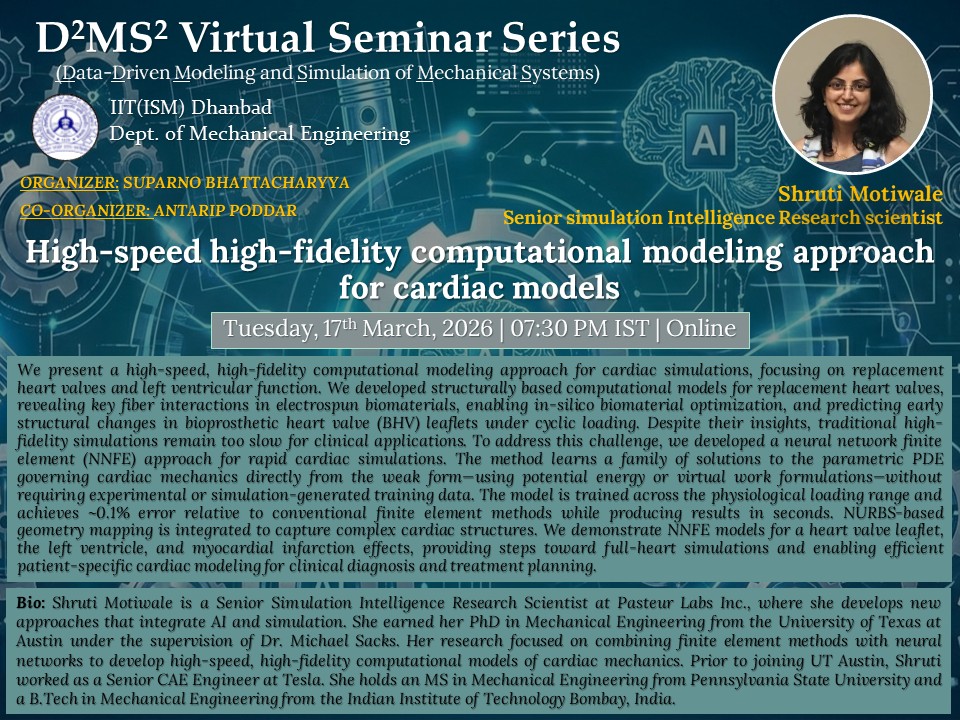

📅 March 17, 2026 | 🕢 07:30 PM IST

Dr. Shruti Motiwale

High-speed, high-fidelity computational modeling approach for cardiac models

Abstract

We present a high-speed, high-fidelity computational modeling approach for cardiac simulations, focusing on replacement heart valves and left ventricular function. We developed structurally based computational models for replacement heart valves that reveal key fiber interactions in electrospun biomaterials, enable in-silico biomaterial optimization, and predict early structural changes in bioprosthetic heart valve (BHV) leaflets under cyclic loading. Despite the insights they provide, traditional high-fidelity simulations remain too slow for clinical applications. To address this challenge, we developed a neural network finite element (NNFE) approach for rapid cardiac simulations. The method learns a family of solutions to the parametric PDE governing cardiac mechanics directly from the weak form (using potential energy or virtual work formulations), without requiring experimental or simulation-generated training data. The model is trained across the physiological loading range and achieves ~0.1% error relative to conventional finite element methods while producing results in seconds. NURBS-based geometry mapping is integrated to capture complex cardiac structures. We demonstrate NNFE models for a heart valve leaflet, the left ventricle, and myocardial infarction effects, taking steps toward full-heart simulations and enabling efficient patient-specific cardiac modeling for clinical diagnosis and treatment planning.

Bio: Shruti Motiwale is a Senior Simulation Intelligence Research Scientist at Pasteur Labs Inc., where she develops new approaches that integrate AI and simulation. She earned her PhD in Mechanical Engineering from the University of Texas at Austin under the supervision of Dr. Michael Sacks. Her research focused on combining finite element methods with neural networks to develop high-speed, high-fidelity computational models of cardiac mechanics. Prior to joining UT Austin, Shruti worked as a Senior CAE Engineer at Tesla. She holds an MS in Mechanical Engineering from Pennsylvania State University and a B.Tech in Mechanical Engineering from the Indian Institute of Technology Bombay, India.

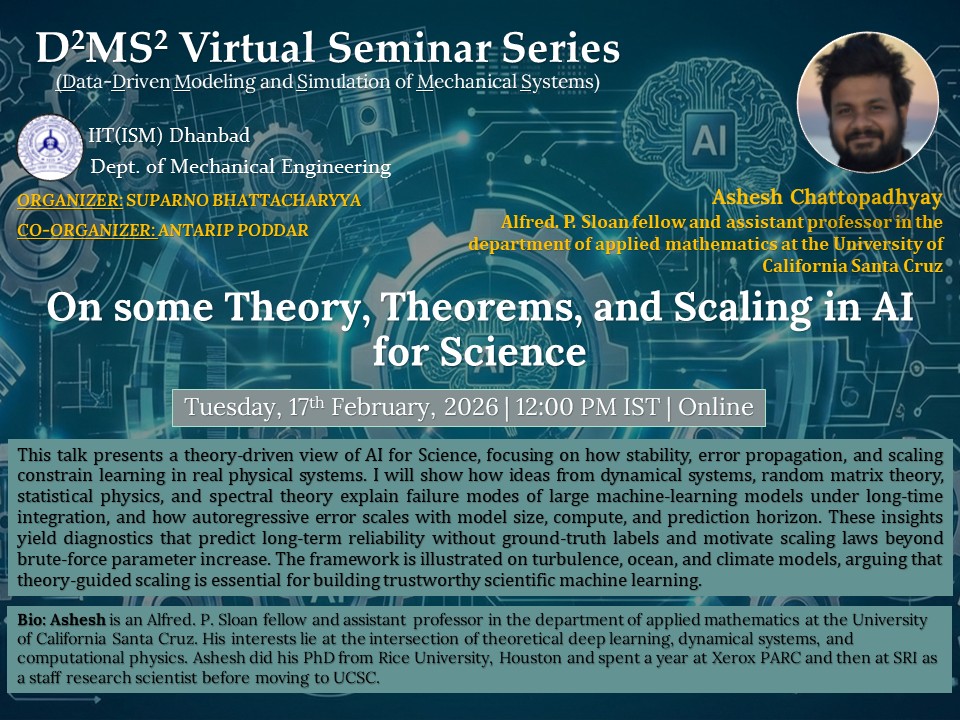

📅 February 17, 2026 | 🕢 12:00 PM IST

Prof. Ashesh Chattopadhyay

On Some Theory, Theorems, and Scaling in AI for Science

Abstract

This talk presents a theory-driven view of AI for Science, focusing on how stability, error propagation, and scaling constrain learning in real physical systems. I will show how ideas from dynamical systems, random matrix theory, statistical physics, and spectral theory explain failure modes of large machine-learning models under long-time integration, and how autoregressive error scales with model size, compute, and prediction horizon. These insights yield diagnostics that predict long-term reliability without ground-truth labels and motivate scaling laws that go beyond brute-force increases in parameter count. The framework is illustrated on turbulence, ocean, and climate models, arguing that theory-guided scaling is essential for building trustworthy scientific machine learning.

Bio: Ashesh is an Alfred P. Sloan Fellow and assistant professor in the Department of Applied Mathematics at the University of California, Santa Cruz. His interests lie at the intersection of theoretical deep learning, dynamical systems, and computational physics. Ashesh received his PhD from Rice University, Houston, and spent a year at Xerox PARC and then at SRI as a staff research scientist before moving to UCSC.

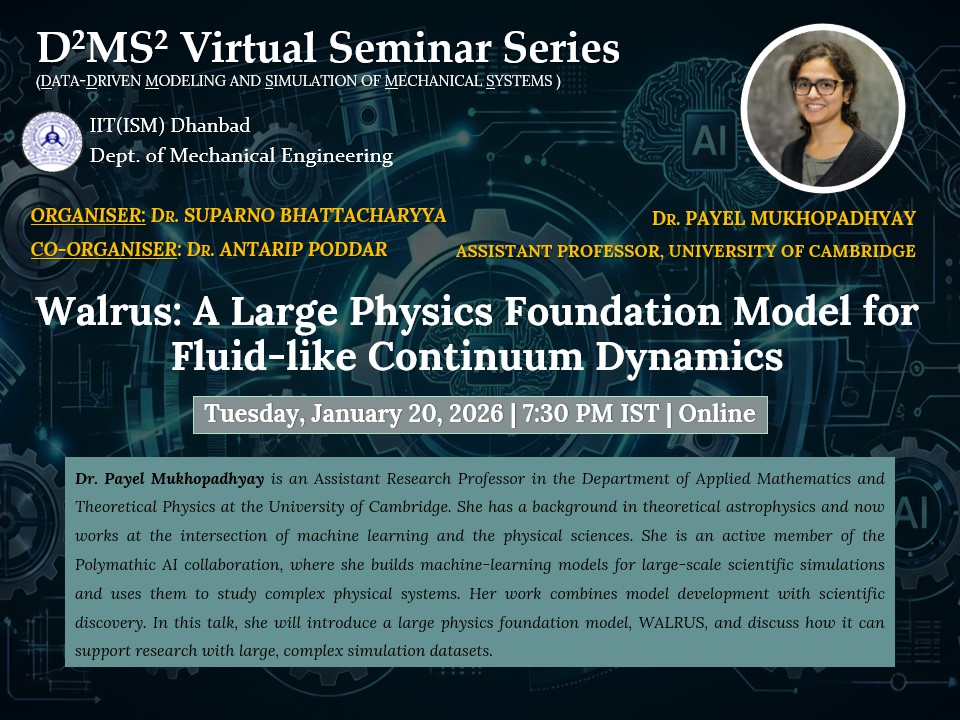

📅 January 20, 2026 | 🕢 07:30 PM IST

Dr. Payel Mukhopadhyay

Assistant Research Professor

University of Cambridge

Walrus: A Large Physics Foundation Model for Fluid-Like Continuum Dynamics

Abstract

Foundation models have transformed machine learning for language and vision, but achieving comparable impact in physical simulation remains a challenge. Data heterogeneity and unstable long-term dynamics inhibit learning from sufficiently diverse dynamics, while varying resolutions and dimensionalities challenge efficient training on modern hardware. Through empirical and theoretical analysis, we incorporate new approaches to mitigate these obstacles, including a harmonic-analysis-based stabilization method, load-balanced distributed 2D and 3D training strategies, and compute-adaptive tokenization. Using these tools, we develop Walrus, a transformer-based foundation model developed primarily for fluid-like continuum dynamics. Walrus is pretrained on nineteen diverse scenarios spanning astrophysics, geoscience, rheology, plasma physics, acoustics, and classical fluids. Experiments show that Walrus outperforms prior foundation models on both short- and long-term prediction horizons on downstream tasks and across the breadth of pretraining data, while ablation studies confirm the value of our contributions to forecast stability, training throughput, and transfer performance over conventional approaches.

👥 Organizers

Dr. Suparno Bhattacharyya

Organizer

Dr. Antarip Poddar

Co-Organizer

For queries, please contact the organizers at the Department of Mechanical Engineering, IIT (ISM) Dhanbad.